Transductive Inference

The brilliant, if eccentric and self-congratulatory, Vladimir Vapnik has been trumpeting a major shift in the scientific method, and perhaps our epistemological stance, over the past few years. Whether or not Vapnik gets his revolution, at the very least I'll wager you will see "transductive inference" gain increasing attention as his ideas trickle out from statistical learning theory to other intellectual fields. So what's it all about?

The goal of science (it can be argued) is the accurate prediction of future or novel events. Since the days of Aristotle, and especially since Bacon, the essential means of scientific inference has been induction. Bearing Hume's warnings in mind, we generally follow this familiar process:

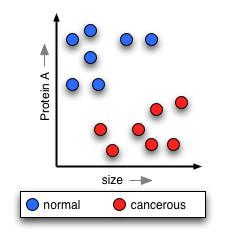

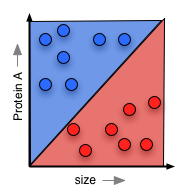

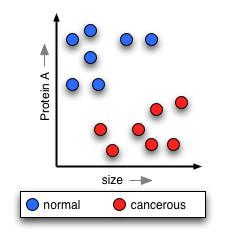

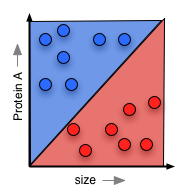

- Make a number of observations.

- Induce a general law (or mathematical function) that we think is generating the phenomenon.

- Use the law to make predictions about future phenomena.

Now, if you're doing normal scientific induction, you'll look at this training data and try to posit a simple rule that explains it and helps you understand nature's "hidden rule" that makes some cells cancerous and others not. In classical statistics, this means you come up with a function that "paints" part of the surface red and part blue. The paint is your prediction about any cell that lands in each region.
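To make the "painting" picture concrete, here is a minimal sketch in Python. Everything in it is my own illustration rather than anything from Vapnik: the two measurements per cell, the sample values, and the choice of a nearest-centroid rule are all assumptions, and I'm assuming red stands for cancerous and blue for healthy. The point is only that induction hands you a rule that colours every point on the surface, seen or unseen.

```python
from statistics import mean

# Hypothetical training data: two made-up measurements per cell, plus a label.
# Assumption for this sketch: "red" = cancerous, "blue" = healthy.
training = [
    ((1.0, 1.2), "blue"),
    ((0.8, 0.9), "blue"),
    ((1.3, 0.7), "blue"),
    ((3.1, 3.0), "red"),
    ((2.9, 3.4), "red"),
    ((3.5, 2.8), "red"),
]

def induce_rule(samples):
    """Induce a general rule from the samples: one centroid per label."""
    centroids = {}
    for label in {lab for _, lab in samples}:
        pts = [p for p, lab in samples if lab == label]
        centroids[label] = (mean(x for x, _ in pts), mean(y for _, y in pts))
    return centroids

def paint(rule, point):
    """Colour any point on the surface with the label of its nearest centroid."""
    def sq_dist(centroid):
        return (point[0] - centroid[0]) ** 2 + (point[1] - centroid[1]) ** 2
    return min(rule, key=lambda label: sq_dist(rule[label]))

rule = induce_rule(training)
print(paint(rule, (1.1, 1.0)))  # a cell near the blue examples
print(paint(rule, (3.0, 3.0)))  # a cell near the red examples
```

Once `rule` exists, the training data could be thrown away: the rule alone answers for every region of the surface, which is exactly the generalization step that transduction skips.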

Vapnik helped found the field of statistical learning theory, in which one takes a slightly different approach. Rather than trying to guess nature's "hidden rule", you worry solely about minimizing the error your function will have when you test it against more liver cells. The surface-painting you come up with might not be parsimonious or a sensible guess about what nature is doing, but if it is a successful predictor, that's fine.
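Here is a rough sketch of that error-first attitude, again with invented numbers. I'm assuming a single made-up measurement per cell and a deliberately tiny family of candidate rules ("cancerous if the measurement exceeds a threshold"); the names `error_rate` and `fit_threshold` are mine. The rule is chosen purely because it makes the fewest mistakes, and then judged on cells it has not seen.

```python
# Hypothetical data: (measurement, is_cancerous). All values are invented.
train = [(0.5, False), (1.1, False), (1.9, False),
         (2.4, True), (3.0, True), (3.6, True)]
held_out = [(0.9, False), (2.1, False), (2.8, True), (3.3, True)]

def error_rate(threshold, cells):
    """Fraction of cells misclassified by 'cancerous if measurement > threshold'."""
    wrong = sum((x > threshold) != label for x, label in cells)
    return wrong / len(cells)

def fit_threshold(cells):
    """Pick the candidate threshold with the lowest error on the given cells."""
    xs = sorted(x for x, _ in cells)
    # Candidates: midpoints between neighbouring measurements.
    candidates = [(a + b) / 2 for a, b in zip(xs, xs[1:])]
    return min(candidates, key=lambda t: error_rate(t, cells))

best = fit_threshold(train)
print("threshold:", best)
print("training error:", error_rate(best, train))
print("held-out error:", error_rate(best, held_out))
```

Nothing here asks whether the threshold means anything biologically. It is kept only because it predicts well, which is the shift in emphasis described above.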

Now comes the upheaval that is transductive reasoning. Vapnik has established mathematically that you pay a certain price in the accuracy of your predictions by generalizing to paint the entire surface either red or blue. So his idea is this: rather than first doing induction to posit a general rule, then making predictions about new liver cells as you see them, you simply transduce to make a prediction about each new cell as you see it, based on everything you've seen before. You don't get a simple rule that you can explain or write down -- all you get is a prediction each time. Vapnik argues that transduction can outperform induction on a given problem, because it never has to solve the harder, more general problem as an intermediate step.
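For contrast, here is a toy sketch of transduction. To be clear about what is assumed: this is a greedy nearest-neighbour label-spreading scheme that I picked because it fits in a few lines, not Vapnik's own formulation, and the points and the `transduce` name are invented. What matters is the shape of the output: labels for exactly the new cells in front of you, with no rule for the rest of the surface.

```python
import math

# Hypothetical labeled cells and the specific new cells we need answers for.
labeled = [((0.0, 0.0), "blue"), ((5.0, 0.0), "red")]
new_cells = [(1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]

def transduce(labeled, unlabeled):
    """Label only the given cells, spreading labels from point to point.

    At each step the unlabeled cell closest to any already-labeled cell
    takes that cell's label and then counts as labeled itself.
    """
    known = list(labeled)
    pending = list(unlabeled)
    predictions = {}
    while pending:
        # Closest (pending cell, known cell) pair by Euclidean distance.
        point, (_, label) = min(
            ((p, k) for p in pending for k in known),
            key=lambda pair: math.dist(pair[0], pair[1][0]),
        )
        predictions[point] = label
        known.append((point, label))
        pending.remove(point)
    return predictions

for cell, label in transduce(labeled, new_cells).items():
    print(cell, label)
```

With these made-up points all three new cells come out blue, even though (3.0, 0.0) sits nearer to the red example than to the blue one: the new cells themselves shape the answer. Ask about a fourth cell and you have to run the whole thing again, because there is no painted surface to consult.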

So this leaves us with this abbreviated scientific method, in which we:

- Make a number of observations.

- Use transduction to make predictions about new phenomena as we encounter them.

Thus far, transduction has only begun to make an impression on the philosophical community. I haven't found any discussion of it in the philosophy of science, but that could be because I don't understand the current problems and arguments in that field. Gilbert Harman, a former professor of mine, is making an intriguing application of transduction to moral reasoning in a paper to be published later in 2005. Essentially, Harman asks whether, if transduction can offer superior classification, we shouldn't attempt to use it to "classify" moral actions into "should do" and "shouldn't do." We would sacrifice the induction of general moral principles that we could elaborate and transmit, but we would (presumably) gain "better" moral decisions. Is it worth giving up comprehensible theories for better predictions? Will we see transductive inference gain a foothold in economics, finance, and the social sciences? It's one to watch.
